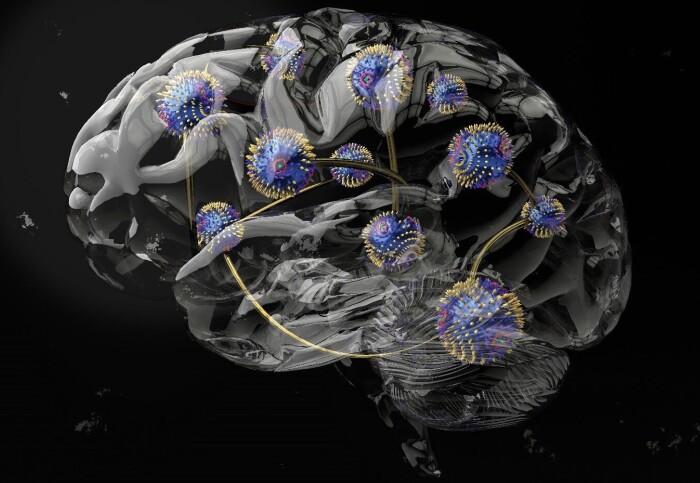

A form of brain-inspired computing that exploits the intrinsic physical properties of a material to reduce energy use is now closer to reality.

Just like our brains

Lead author Dr Oscar Lee (London Centre for Nanotechnology at UCL and UCL Department of Electronic & Electrical Engineering) said: “This work brings us a step closer to realising the full potential of physical reservoirs to create computers that not only require significantly less energy, but also adapt their computational properties to perform optimally across various tasks, just like our brains.

“The next step is to identify materials and device architectures that are commercially viable and scalable.”

Traditional computing consumes large amounts of electricity. This is partly because it has separate units for data storage and processing, meaning information has to be shuffled constantly between the two, wasting energy and producing heat. This is a particular problem for machine learning, which has to process vast datasets. Training one large AI model can generate hundreds of tonnes of carbon dioxide.

Physical reservoir computing is one of several neuromorphic (or brain-inspired) approaches that aim to remove the need for distinct memory and processing units, facilitating more efficient ways to process data. In addition to being a more sustainable alternative to conventional computing, physical reservoir computing could be integrated into existing circuitry to provide additional capabilities that are also energy efficient.

Powering unconventional computing

In the study, involving researchers in Japan and Germany, the team used a vector network analyser to determine the energy absorption of chiral magnets at different magnetic field strengths and temperatures ranging from -269 °C to room temperature.

They found that different magnetic phases of chiral magnets excelled at different types of computing tasks. The skyrmion phase, where magnetised particles swirl in a vortex-like pattern, had a potent memory capacity apt for forecasting tasks. The conical phase, meanwhile, had little memory, but its non-linearity was ideal for transformation tasks and classification – for instance, identifying whether an animal is a cat or a dog.
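
For readers curious what "memory capacity" means in practice, the idea can be illustrated with a small software reservoir. The sketch below is not the team's method and does not model chiral magnets: it assumes a generic simulated network (an "echo state network") with fixed random connections, and trains only a simple linear readout to recall an earlier input. The function names, network size, delays and all other parameter values are illustrative assumptions.

```python
import numpy as np

def run_reservoir(inputs, n_nodes=50, spectral_radius=0.9, seed=0):
    """Drive a fixed random recurrent network and record its state at each step."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-0.5, 0.5, size=n_nodes)   # fixed input weights (assumed scale)
    w = rng.normal(size=(n_nodes, n_nodes))       # fixed recurrent weights
    # Rescale so the largest eigenvalue magnitude equals spectral_radius,
    # which makes the influence of old inputs fade gradually.
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    state = np.zeros(n_nodes)
    states = np.empty((len(inputs), n_nodes))
    for t, u in enumerate(inputs):
        state = np.tanh(w @ state + w_in * u)     # non-linear update
        states[t] = state
    return states

def recall_error(states, inputs, delay, washout=50, n_train=600, ridge=1e-4):
    """Normalised test error of a linear readout trained to output inputs[t - delay]."""
    x = states[washout:]                                   # skip the start-up transient
    y = inputs[washout - delay:len(inputs) - delay]        # the input seen `delay` steps ago
    x_train, y_train = x[:n_train], y[:n_train]
    x_test, y_test = x[n_train:], y[n_train:]
    # Ridge regression: only this readout is trained, the reservoir stays fixed.
    gram = x_train.T @ x_train + ridge * np.eye(x.shape[1])
    weights = np.linalg.solve(gram, x_train.T @ y_train)
    pred = x_test @ weights
    return float(np.mean((pred - y_test) ** 2) / np.var(y_test))

# Random input signal; compare recalling a recent input with a much older one.
signal = np.random.default_rng(1).uniform(-1, 1, size=1000)
states = run_reservoir(signal)
errors = {delay: recall_error(states, signal, delay) for delay in (1, 20)}
print(errors)
```

A lower error at a given delay means the reservoir still "remembers" that input. In a physical reservoir, the material's own dynamics would play the role of `run_reservoir`, so switching magnetic phase is loosely analogous to changing how quickly that memory fades and how non-linear the response is.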

Co-author Dr Jack Gartside, from the Department of Physics at Imperial, said: “Our collaborators at UCL in the group of Professor Hidekazu Kurebayashi recently identified a promising set of materials for powering unconventional computing. These materials are special as they can support an especially rich and varied range of magnetic textures.

“Working with the lead author Dr Oscar Lee, the Imperial College London group [led by Dr Gartside, Kilian Stenning and Professor Will Branford] designed a neuromorphic computing architecture to leverage the complex material properties to match the demands of a diverse set of challenging tasks. This gave great results, and showed how reconfiguring physical phases can directly tailor neuromorphic computing performance.”

The work also involved researchers at the University of Tokyo and Technische Universität München and was supported by the Leverhulme Trust, Engineering and Physical Sciences Research Council (EPSRC), Imperial College London President’s Excellence Fund for Frontier Research, Royal Academy of Engineering, the Japan Science and Technology Agency, Katsu Research Encouragement Award, Asahi Glass Foundation, and the DFG (German Research Foundation).